The Last Pull

Navigating the knowns and unknowns of life

You Walk Into a Casino...

It’s your last night in Vegas. You have an hour before you head to the airport and $100 to spend. In front of you are 5 identical slot machines. Each has a different, unknown payout. You put $5 into the first machine. Pull the lever. Nothing. You try the second machine. Jackpot! $15 flows out. Now what?

Do you stick with the machine that just paid out? Or do you explore the other three machines you haven't tried yet? What if Machine 2 was lucky, and Machine 4 is the real goldmine? But what if you waste money exploring bad machines when you could be cashing in on 2?

This is a fundamental problem in learning and decision making: do you explore or do you exploit? This tension between playing it safe with what you know works and taking a risk to discover something better is at the heart of how most intelligent systems learn.

The Heart of Learning

That exact feeling as you stand in front of those 5 machines is the exploration vs. exploitation dilemma.

Exploitation means doubling down on what you know works. Stick with Machine 2. Play it safe. Maximize your short-term returns based on current knowledge.

Exploration means venturing into the unknown. Try Machines 3, 4, and 5. Accept short-term losses for the possibility of long-term gains. Gather information that could change your course of action.

I face this dilemma everywhere. Should I get the tried and tested Pad Kee Mao, or should I venture out and try the Massaman Curry? But life diverges from the casino floor and the Thai restaurant. There, you can spread your bets: pull multiple machines and lose a few dollars learning, or make up for a bad entrée with a good appetizer. In most life decisions, you only get one pull that could change everything. Sometimes you’re wagering months or years that you will never get back on a single decision with an unknown payoff. The crux remains the same: with limited information, you need to make decisions that maximize your long-term payout.

From Slot Machines To Algorithms

Suppose you played the game with a logbook of every past pull and its exact payout. With this complete dataset, you could study the patterns and work out your strategy in advance. This resembles supervised learning, where you have labeled data, you know the right action for each example, and you generalize by finding patterns. This is a full information problem.
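To make the full information case concrete, here is a minimal sketch. The logbook entries are made-up numbers for illustration, and `average_payouts` is a hypothetical helper, not a real supervised learning model. With every past outcome on the page, choosing a machine reduces to reading off the best average.

```python
from collections import defaultdict

# Hypothetical logbook: (machine number, payout in dollars) for past pulls.
# These values are invented purely for illustration.
logbook = [
    (1, 0), (1, 0), (1, 5),
    (2, 15), (2, 0), (2, 10),
    (3, 0), (3, 5), (3, 0),
]

def average_payouts(log):
    """Return the mean payout per machine from a complete log of pulls."""
    totals = defaultdict(float)
    counts = defaultdict(int)
    for machine, payout in log:
        totals[machine] += payout
        counts[machine] += 1
    return {machine: totals[machine] / counts[machine] for machine in totals}

averages = average_payouts(logbook)
# With full information there is nothing to explore: pick the best average.
best_machine = max(averages, key=averages.get)
print(averages, best_machine)
```

There is no exploration in this sketch because nothing is hidden. The dilemma only appears once the logbook is taken away.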

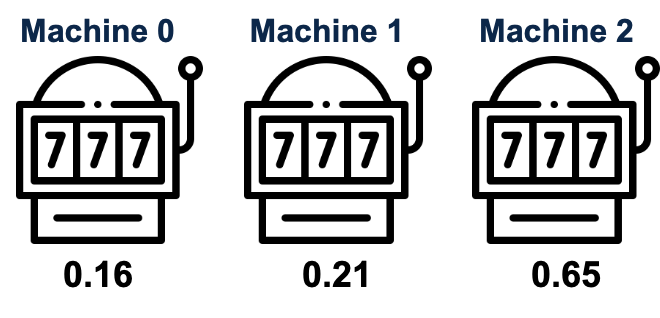

But the traditional casino dilemma has a more formal name: the Multi-Armed Bandit¹ problem. Each slot machine is a one-armed bandit, and you have multiple arms to pull. Each machine has an unknown payout rate, and every pull costs you a buy-in.

Your goal is to maximize your cumulative reward over many pulls, and you gather information about a machine only when you pull it. This is a partial information problem. You only find out the reward for the action you actually chose, and you get no information about what you would have received had you chosen a different one.

But what if the machines weren't identical? What if you could see that Machine 1 is in a high foot traffic area, Machine 2 has been recently serviced, and Machine 3 is tucked into a corner? This is the contextual bandit problem². You now have additional information about each choice that could help you make an informed decision. The same exploration vs. exploitation tradeoff remains, and you still only see the reward for the machine you pull, but you have a little more to work with.

If you want to know how this could be used in practice, here’s a great technical blog on how DoorDash uses Multi-Armed Bandits with Thompson Sampling to Identify Responsive Dashers.³

A More Sophisticated Form Of Learning

This is the perfect introduction to reinforcement learning. In RL, you are the agent navigating an environment. You take actions and receive rewards based on those actions. Your goal is to learn a policy that maximizes your cumulative reward over time.

The casino is the environment, and your state determines what information you have about each machine. Choosing which machine to pull is your action, the payout you get is your reward, and your strategy for deciding which machine to pull next is your policy. What makes this a learning problem is that you start with virtually no information about the environment and have to gather it as you act, moving from state to state. Unlike other types of learning, you don’t have a way of getting the right answer or label. You learn through trial and error, making decisions with very little information.

Let’s look at a few strategies, or as we call them policies, that help us with our dilemma.

Strategy 1: The ε-Greedy Gambler

The epsilon-greedy algorithm gives you a simple approach: most of the time pull on your best performing machine but occasionally take a random chance on a different one.

Pick a small probability ε, let’s say 0.1. 90% of the time, we exploit our best-performing machine. The other 10% of the time, we explore and randomly pick a different machine. This way you get to enjoy the returns of your current best-performing machine while still gathering information on the others. The 10% spent on exploration isn’t wasted money. It’s the cost of information.

The epsilon parameter is a dial to tune your strategy. Set ε = 0 and you become purely greedy with no exploration. Set ε = 1 and you become purely random with constant exploration but no exploitation of what you've learned. The sweet spot is somewhere in between.
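Here is a minimal sketch of the epsilon-greedy gambler on simulated machines. The payout probabilities, the $1-or-nothing rewards, and the function name `epsilon_greedy` are assumptions for illustration. Following the description above, the exploratory pull picks a machine other than the current best. Many textbook versions instead pick uniformly among all machines, including the current best.

```python
import random

def epsilon_greedy(payout_probs, epsilon, num_pulls, seed=0):
    """Simulate an epsilon-greedy player; return (total reward, pulls per machine)."""
    rng = random.Random(seed)
    n = len(payout_probs)  # assumes at least two machines
    counts = [0] * n       # how often each machine was pulled
    estimates = [0.0] * n  # running average payout per machine
    total_reward = 0.0

    for _ in range(num_pulls):
        # Current best machine by estimated payout (ties go to the lowest index).
        best = max(range(n), key=lambda m: estimates[m])
        if rng.random() < epsilon:
            # Explore: pick a random machine other than the current best.
            machine = rng.choice([m for m in range(n) if m != best])
        else:
            # Exploit: stick with the current best.
            machine = best

        # Simulated pull: pays $1 with the machine's hidden probability.
        reward = 1.0 if rng.random() < payout_probs[machine] else 0.0
        counts[machine] += 1
        # Incremental update of the running average.
        estimates[machine] += (reward - estimates[machine]) / counts[machine]
        total_reward += reward

    return total_reward, counts

# Hypothetical hidden payout probabilities; machine 3 (0-indexed) is the best.
hidden_probs = [0.10, 0.30, 0.20, 0.50, 0.15]
for eps in (0.0, 0.1, 1.0):
    total, counts = epsilon_greedy(hidden_probs, eps, num_pulls=1000)
    print(eps, total, counts)
```

With ε = 0 this player never leaves the first machine it tries, which illustrates how pure greed can lock onto a poor choice. How well ε = 0.1 does depends on the random seed and the hidden probabilities, so treat any single run as an anecdote rather than a result.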

Strategy 2: The Optimistic Gambler

There’s a slightly smarter way to explore by being optimistic about uncertainty. We refer to this as the Upper Confidence Bound algorithm. Instead of random exploration, we attempt to be optimistic about machines we don’t know much about. In other words, the less information we have about a machine, the more optimistic we’ll be about its potential.

Let’s say that every machine has an estimated payout rate with a confidence interval around it. A machine we have pulled a dozen times has a relatively narrow confidence interval, because we have more information about its payout, whereas a machine we have pulled only once or twice has a very wide one. The algorithm always picks the machine with the highest upper confidence bound. This is exploration with purpose. Instead of randomly trying machines we already know are bad, we focus our exploration on the unknown.
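One common concrete version of this idea is UCB1, sketched below under the same toy assumptions as before ($1-or-nothing rewards and made-up payout probabilities). The post does not say which variant it has in mind, so UCB1 is a choice made for this example. Each machine's score is its average payout plus a bonus of sqrt(2 ln t / n), where t is the total number of pulls so far and n is how often that machine has been pulled. The bonus shrinks as a machine is pulled more, which is the narrowing confidence interval described above.

```python
import math
import random

def ucb1(payout_probs, num_pulls, seed=0):
    """Run UCB1 on simulated machines; return (pull counts, payout estimates)."""
    rng = random.Random(seed)
    n = len(payout_probs)
    counts = [0] * n       # how often each machine was pulled
    estimates = [0.0] * n  # running average payout per machine

    def upper_bound(machine, t):
        # Average payout plus an optimism bonus that shrinks with more pulls.
        return estimates[machine] + math.sqrt(2.0 * math.log(t) / counts[machine])

    for t in range(1, num_pulls + 1):
        if t <= n:
            # Pull every machine once so each has a defined bound.
            machine = t - 1
        else:
            # Pick the machine with the highest upper confidence bound.
            machine = max(range(n), key=lambda m: upper_bound(m, t))

        # Simulated pull: pays $1 with the machine's hidden probability.
        reward = 1.0 if rng.random() < payout_probs[machine] else 0.0
        counts[machine] += 1
        estimates[machine] += (reward - estimates[machine]) / counts[machine]

    return counts, estimates

# Hypothetical hidden payout probabilities; machine 3 (0-indexed) is the best.
hidden_probs = [0.10, 0.30, 0.20, 0.50, 0.15]
counts, estimates = ucb1(hidden_probs, num_pulls=2000)
print(counts)
```

Unlike the epsilon-greedy sketch, there is no randomness in the choice of machine here. All the exploration comes from the bonus term, so rarely pulled machines keep getting revisited until their bound drops below the leader's.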

Standing at Crossroads

The exploration vs. exploitation dilemma sits at the foundation of intelligence. To learn, you must be willing to temporarily perform worse than your current best strategy and pay the price for information. The epsilon-greedy gambler who occasionally tries new machines might lose money on a particular exploratory pull, but over time that exploration leads to better opportunities. The optimistic gambler might seem foolishly hopeful, but that optimism is mathematically justified: every pull shrinks the uncertainty about a machine, so a bad machine cannot stay overrated for long. Pure exploitation means you'll never discover anything better than what you currently know. Pure exploration means you'll never benefit from what you've already learned.

The casino was never about the money but rather about the deeper question: How do you learn to make better decisions when you don't know what you don't know?

But the deeper questions arrive when you apply this to life. As you navigate the knowns and unknowns, you realize that life’s most crucial decisions cannot be hedged. They force a change of policy that could alter the course of your life. Unlike a gambler with infinite quarters, you have a finite stack of chips, and every exploratory bet shapes everything that follows. The cost of an action is an unlived life.

You don’t know what you don’t know and you only know what you know. What do you do? Do you explore or do you exploit?

Credits

1 Lilian Weng’s Lil’Log for a lot of ideas

2 Yash Rathod for the blog suggestion

Personally, this is why I think that a slept on skill in life (especially early career/life) is being intentional about low-stakes ways to try different things out. Especially when what you are testing is a mini version of some life decision that will be lasting (e.g. what career to go into, who to settle with, etc.)

RL has been (for me) the only technical topic where I can read academic papers that tackle purely technical problems and still make me think about my life philosophy. Great piece.